Honeypot overview

Introduction

The Honeypot feature is designed to measure annotation quality by comparing human-generated labels against a trusted reference, known as honeypot labels (ground truth).

By introducing controlled ground truth data into your project, Honeypot enables you to quantitatively evaluate how closely annotators’ outputs align with expected results. The resulting Honeypot score reflects the level of agreement between a human label and the reference label, providing a reliable indicator of annotation accuracy and consistency across your workflow.

Key Principles

Step-based evaluation

Honeypot scoring is triggered at a specific step in your workflow. This lets you evaluate quality precisely where it matters most, whether at the initial labeling stage or at a later review step where higher accuracy is expected.

Centralized quality monitoring

Honeypot metrics are accessible to the users responsible for overseeing project performance and quality:

- Project Admins

- Team Managers

Ground truth via Honeypot labels

Ground truth is introduced through dedicated Honeypot labels, which are uploaded to selected assets and used as the reference for evaluation.

How to Activate Honeypot

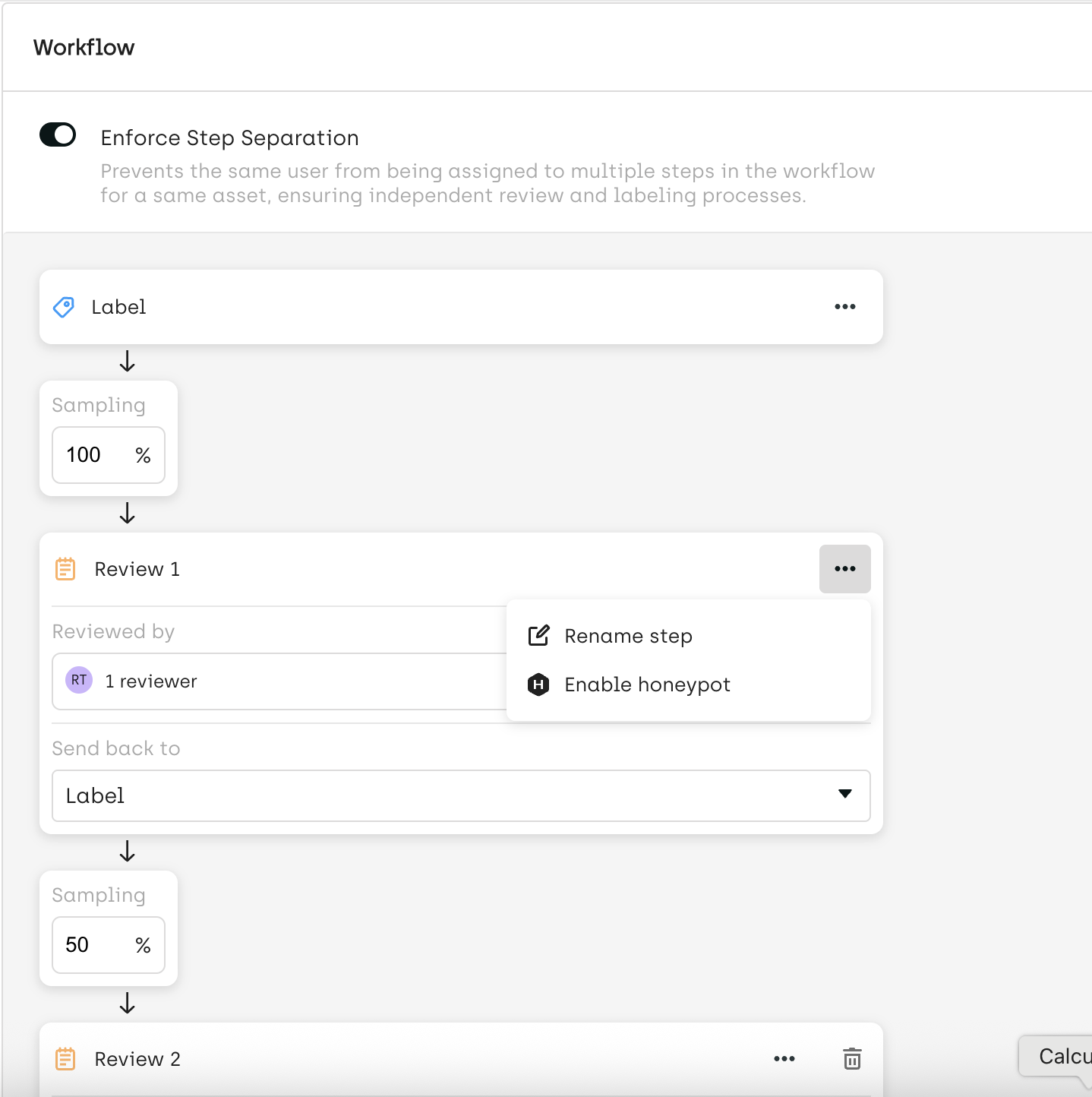

Step 1 — Configure Honeypot in Your Workflow

From the Workflow Editor:

- Right-click on the step where you want to evaluate quality

- Select “Enable Honeypot”

Note:

Honeypot can only be enabled on one workflow step per project.

Step 2 — Upload Your Honeypot Labels

Use the SDK to upload ground truth labels to selected assets using the kili.create_honeypot method.

You will need to provide:

- asset_id or asset_external_id (the asset that receives the Honeypot label)

- json_response (the reference label)

This step defines the expected output against which human annotations will be evaluated.
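The upload can be scripted for many assets at once. The sketch below assumes the Kili Python SDK client exposes create_honeypot(json_response=..., asset_external_id=..., project_id=...) and that a classification job's json_response has the shape {"JOB_0": {"categories": [{"name": ...}]}}. The helper functions, the job name JOB_0, and the category names are hypothetical; check the method signature and the response format against your SDK version and your project's json interface before relying on it.

```python
from typing import Any, Dict, Iterable, List, Mapping


def classification_response(job_name: str, categories: Iterable[str]) -> Dict[str, Any]:
    """Build a json_response for a classification job (assumed Kili format)."""
    return {job_name: {"categories": [{"name": name} for name in categories]}}


def upload_honeypots(
    kili: Any,
    project_id: str,
    references: Mapping[str, Dict[str, Any]],
) -> List[Any]:
    """Upload one Honeypot label per asset, keyed by asset external ID.

    `kili` is expected to be a Kili client (assumption: kili.client.Kili);
    any object with a compatible create_honeypot method works, which keeps
    this helper easy to test without network access.
    """
    results = []
    for external_id, json_response in references.items():
        # The asset is identified by its external ID, so the project ID is passed too
        results.append(
            kili.create_honeypot(
                json_response=json_response,
                asset_external_id=external_id,
                project_id=project_id,
            )
        )
    return results


# Hypothetical usage with a real client:
# from kili.client import Kili
# kili = Kili(api_key="<your-api-key>")
# upload_honeypots(kili, "<your-project-id>",
#                  {"asset-001": classification_response("JOB_0", ["CAT"])})
```

Passing the client in as a parameter, rather than creating it inside the helper, is a design choice that keeps credentials out of the function and makes it straightforward to substitute a stub during testing.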

Honeypot Execution

Once configured, Honeypot scoring runs automatically:

- It is triggered when a label is submitted at the workflow step where Honeypot is enabled

- The system compares the submitted label with the corresponding Honeypot label

- A Honeypot score is computed based on predefined evaluation rules
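The actual evaluation rules depend on the job type and are documented separately. Purely to illustrate what "comparing a submitted label with its Honeypot label" means, the sketch below uses a hypothetical agreement measure for a classification job: the Jaccard index (intersection over union) of the selected categories, scaled to 0 to 100. It assumes the same json_response shape as above and is not necessarily how Kili computes the score.

```python
from typing import Any, Dict, Set


def selected_categories(json_response: Dict[str, Any], job_name: str) -> Set[str]:
    """Return the category names selected for one classification job."""
    job = json_response.get(job_name, {})
    return {category["name"] for category in job.get("categories", [])}


def toy_honeypot_score(
    submitted: Dict[str, Any], honeypot: Dict[str, Any], job_name: str
) -> float:
    """Hypothetical agreement score: Jaccard index of selected categories, in [0, 100]."""
    human = selected_categories(submitted, job_name)
    truth = selected_categories(honeypot, job_name)
    union = human | truth
    if not union:
        # Neither label selected anything for this job: treat as full agreement
        return 100.0
    return 100.0 * len(human & truth) / len(union)


# Annotator picked CAT and DOG, ground truth is CAT only: 1 shared / 2 total
example = toy_honeypot_score(
    {"JOB_0": {"categories": [{"name": "CAT"}, {"name": "DOG"}]}},
    {"JOB_0": {"categories": [{"name": "CAT"}]}},
    "JOB_0",
)  # 50.0
```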

How to View Honeypot Labels and Scores

Explore Interface

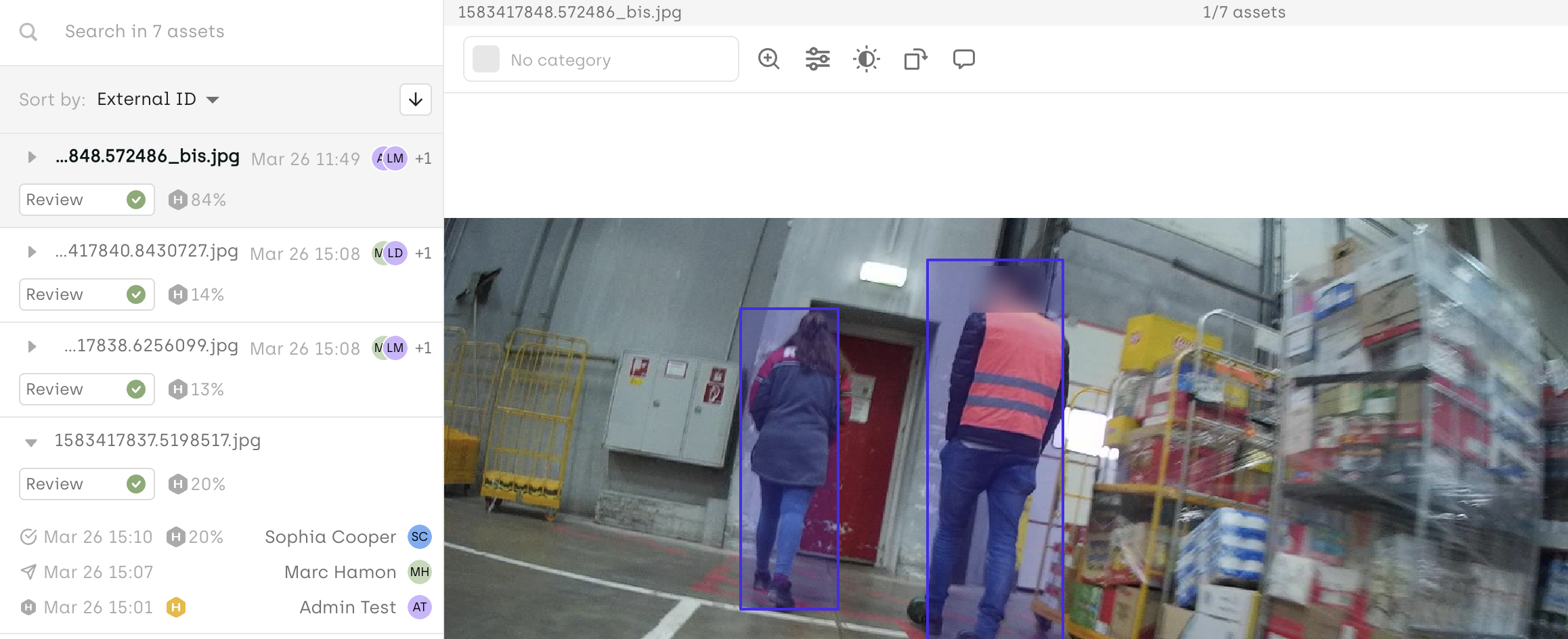

Project Admins and Team Managers can inspect Honeypot performance directly in the Explore interface:

- Honeypot assets are identifiable via a dedicated icon (see screenshot)

- You can visually compare:

  - Human labels

  - Honeypot (ground truth) labels

- The Honeypot score is displayed on labels associated with the step where Honeypot is enabled

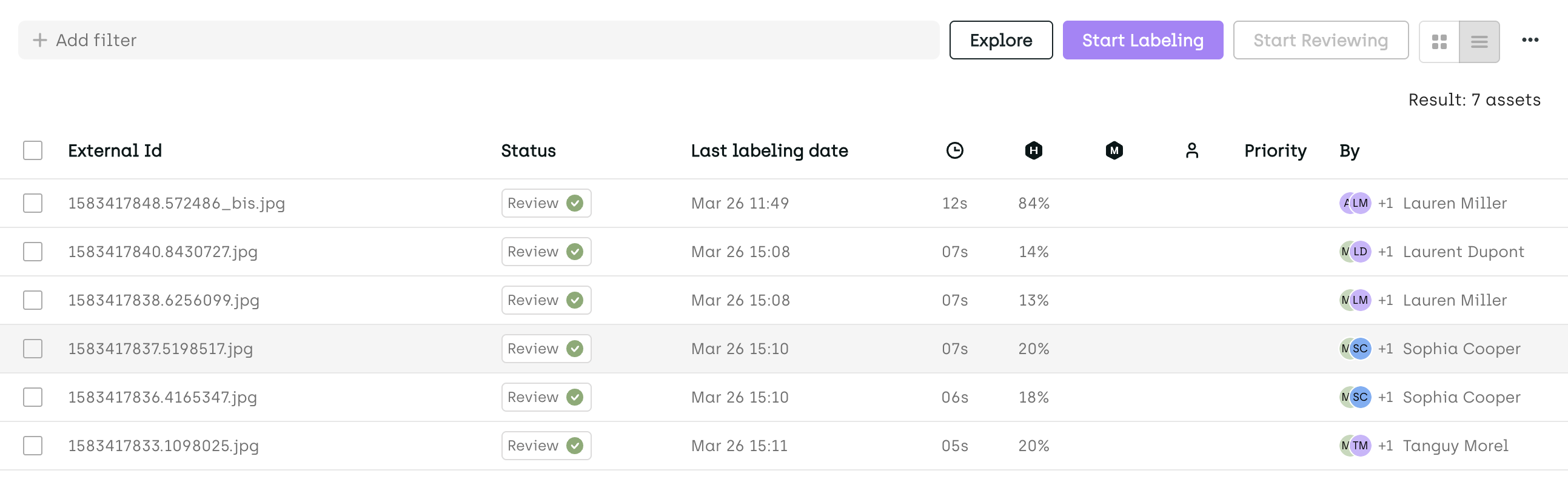

Queue Page

Honeypot scores are also visible in the Queue page, enabling quick access and filtering across assets.

Analytics Page

In the Analytics → Quality Insights section, you can:

- View aggregated Honeypot performance

- Analyze score distribution across project members
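If you export label records yourself, a similar per-member view can be approximated offline. The sketch below assumes each record is a dict with a member identifier under "author" and a Honeypot score under "honeypot_score"; both keys are hypothetical, so map them from whatever your export or SDK query actually returns. It computes each member's mean score and the number of scored labels, skipping records that carry no score.

```python
from collections import defaultdict
from statistics import mean
from typing import Any, Dict, Iterable, List, Tuple


def honeypot_by_member(records: Iterable[Dict[str, Any]]) -> Dict[str, Tuple[float, int]]:
    """Map each member to (mean Honeypot score, number of scored labels)."""
    scores: Dict[str, List[float]] = defaultdict(list)
    for record in records:
        score = record.get("honeypot_score")
        if score is None:
            # Skip records without a score (e.g. labels not evaluated against a Honeypot)
            continue
        scores[record["author"]].append(float(score))
    return {author: (mean(values), len(values)) for author, values in scores.items()}
```

Reporting the label count next to the mean helps avoid over-reading a score that rests on only one or two Honeypot assets.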

Calculation Rules

👉 Detailed calculation rules are available here

You can choose whether a specific labeling job is taken into account in the Honeypot calculation by using the isIgnoredForMetricsComputations setting. For details, refer to Customizing the interface through json settings.
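For illustration, the sketch below assumes isIgnoredForMetricsComputations is a boolean set at the job level of the project's json interface (jobs → job name → isIgnoredForMetricsComputations); the job names are hypothetical. It returns an updated copy of the interface, which could then be saved through the interface editor or, assuming your SDK version supports it, with kili.update_properties_in_project(project_id=..., json_interface=...).

```python
import copy
from typing import Any, Dict, Iterable


def ignore_jobs_for_metrics(
    json_interface: Dict[str, Any], job_names: Iterable[str]
) -> Dict[str, Any]:
    """Return a copy of json_interface with the given jobs excluded from metrics.

    Assumption: the flag lives on each job entry under json_interface["jobs"].
    """
    updated = copy.deepcopy(json_interface)  # leave the caller's dict untouched
    jobs = updated.get("jobs", {})
    for name in job_names:
        if name not in jobs:
            raise KeyError(f"Unknown job: {name}")
        jobs[name]["isIgnoredForMetricsComputations"] = True
    return updated
```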
