Consensus overview

Introduction

The Consensus metric is designed to measure agreement between multiple annotators working on the same asset.

It is particularly useful for tasks involving ambiguity, subjectivity, or complex interpretation, where a single “correct” answer may not be sufficient. By collecting multiple independent annotations, Consensus helps you assess consistency across labelers and ensure alignment with project guidelines.

In Kili, Consensus relies on inter-annotator agreement, requiring a predefined number of labelers to annotate the same asset. Their outputs can then be compared, reviewed, and consolidated in subsequent workflow steps.

Typical use cases include:

- Evaluating annotation quality on subjective tasks (e.g., classification, NLP, or complex visual interpretation)

- Calibrating new projects or onboarding labelers

- Identifying ambiguous instructions or edge cases

- Ensuring consistency across large annotation teams

How to Set Up Consensus

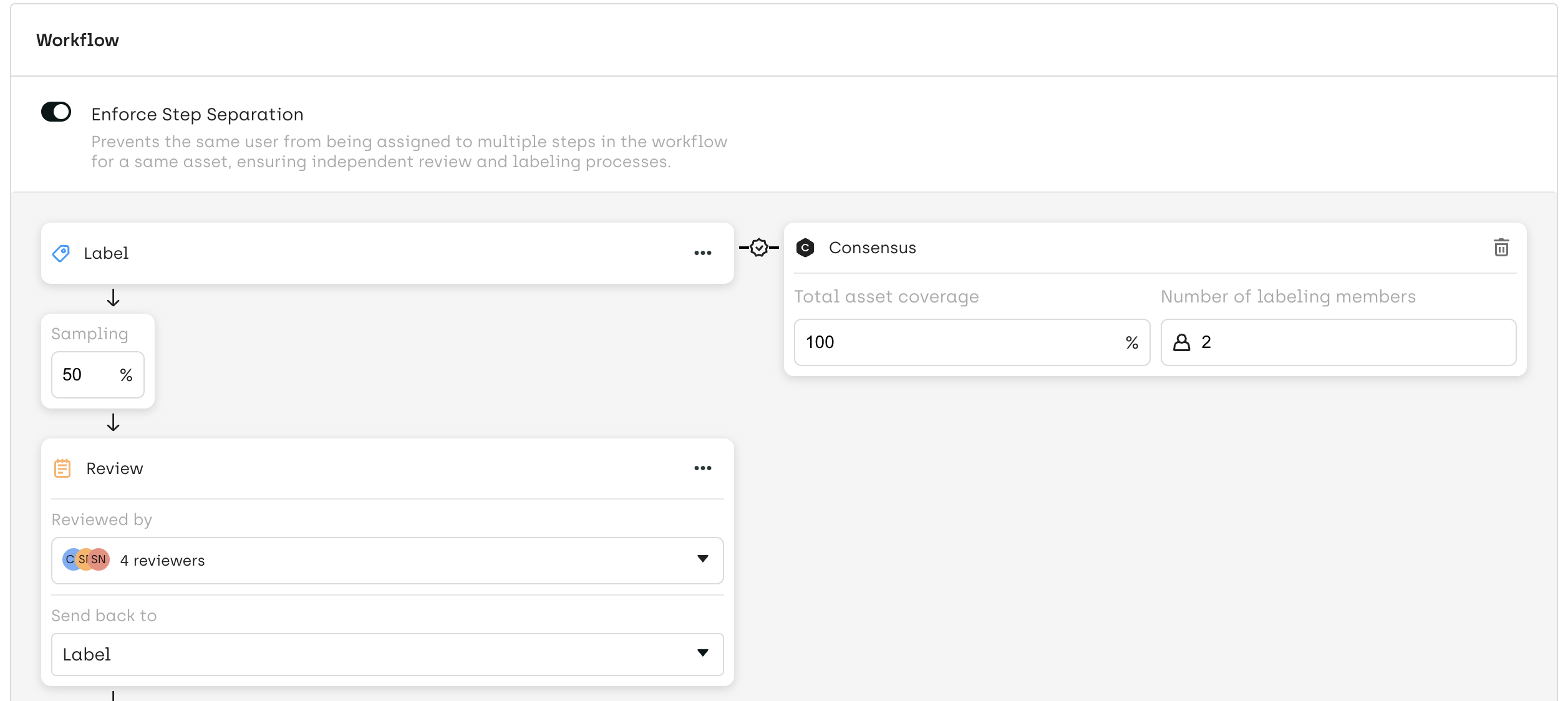

From the Workflow Editor:

- Right-click on the labeling step where you want to enable Consensus

- Select “Enable Consensus”

- Configure the following parameters:

  - Number of labelers: how many annotators will work on the same asset

  - Coverage (%): percentage of assets that will go through the Consensus process

Note:

Ensure that your team size and workload distribution can support the selected number of labelers per asset.
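To check whether a configuration is realistic for your team, you can estimate the labeling workload it implies before enabling it. The sketch below is illustrative only: the function name `consensus_workload` and its parameters are hypothetical, and it assumes that assets outside the Consensus sample are labeled exactly once and that a labeler annotates a given asset at most once.

```python
def consensus_workload(num_assets: int, num_labelers: int,
                       coverage_pct: float, team_size: int) -> dict:
    """Estimate the number of labels a Consensus configuration requires.

    Assumptions (not taken from the Kili documentation):
    - assets outside the Consensus sample receive exactly one label;
    - a labeler annotates a given asset at most once, so the team must be
      at least as large as the number of labelers per asset.
    """
    if num_labelers < 2:
        raise ValueError("Consensus compares at least 2 labelers per asset")
    if not 0 <= coverage_pct <= 100:
        raise ValueError("coverage_pct must be between 0 and 100")
    if team_size < num_labelers:
        raise ValueError("team is smaller than the number of labelers per asset")

    # Assets that go through Consensus, and assets labeled only once.
    consensus_assets = round(num_assets * coverage_pct / 100)
    single_assets = num_assets - consensus_assets

    total_labels = consensus_assets * num_labelers + single_assets
    return {
        "consensus_assets": consensus_assets,
        "total_labels": total_labels,
        "labels_per_labeler": total_labels / team_size,
    }


# Example: 1,000 assets, 3 labelers per asset, 20% coverage, team of 5.
estimate = consensus_workload(1000, 3, 20, team_size=5)
print(estimate)
```

In this example, 200 assets go through Consensus, which raises the total from 1,000 to 1,400 labels, or 280 labels per team member on average.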

How Consensus Is Executed

When Consensus is enabled on a labeling step:

- Each asset is assigned to multiple labelers based on your configuration

- As labelers submit their annotations:

  - The asset remains in a Partially Done status until the required number of annotations is reached

- Once all required labels are submitted:

  - A Consensus score is automatically calculated, measuring the level of agreement between annotators based on predefined comparison rules

  - The asset is marked as Done, or automatically transitions to the next step (e.g., Review), depending on your workflow configuration and sampling rules

This process ensures that multiple independent perspectives are collected before any validation or consolidation step.
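The status progression described above can be pictured as a small state model. This is a minimal sketch for illustration, not Kili's implementation: the class `ConsensusAsset` is hypothetical, and the initial "To Do" status name is an assumption, since this page only names Partially Done and Done.

```python
from dataclasses import dataclass, field


@dataclass
class ConsensusAsset:
    """Hypothetical model of one asset going through a Consensus step."""
    required_labels: int
    submitted_by: list = field(default_factory=list)

    @property
    def status(self) -> str:
        submitted = len(self.submitted_by)
        if submitted == 0:
            return "To Do"  # assumed name for the initial status
        if submitted < self.required_labels:
            return "Partially Done"
        return "Done"

    def submit(self, labeler: str) -> str:
        """Record one labeler's submission and return the new status."""
        if labeler in self.submitted_by:
            raise ValueError(f"{labeler} already labeled this asset")
        if len(self.submitted_by) >= self.required_labels:
            raise ValueError("all required labels are already submitted")
        self.submitted_by.append(labeler)
        return self.status


asset = ConsensusAsset(required_labels=3)
for name in ["alice", "bob", "carol"]:
    print(name, "->", asset.submit(name))
```

With three required labels, the first two submissions leave the asset in Partially Done, and the third moves it to Done, the point at which the Consensus score would be calculated.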

Reviewing Consensus Labels

Once the asset reaches the Review stage, all submitted annotations are accessible for comparison in the Explore interface.

Reviewers can:

- Compare concurrent labels from multiple annotators

- Analyze differences and identify disagreements

- Decide on the appropriate next action

Available actions:

- Review

  - Validates the result (with or without modifications)

  - Creates a new consolidated label in the Review stage

- Send back

  - Sends a selected label back to the labeling step for correction

  - The asset moves back to the labeling step with a Redo status

  - Updated labels will be available again for review once corrections are submitted

This review process allows you to resolve disagreements, refine annotations, and continuously improve labeling quality.

Calculation Rules

You can decide whether or not a specific labeling job is taken into account when calculating consensus by using the `isIgnoredForMetricsComputations` setting. For details, refer to Customizing the interface through json settings.

For calculation details, refer to Calculation rules for quality metrics.
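To build intuition for how excluding a job changes an agreement score, here is a simplified sketch. It is not Kili's actual formula, which is described in the calculation rules page: it uses mean pairwise exact-match agreement on classification answers as a stand-in. The interface fragment, the job names, and the job-level placement of `isIgnoredForMetricsComputations` are assumptions made for illustration.

```python
from itertools import combinations

# Hypothetical, simplified interface fragment: two classification jobs,
# one of which is excluded from metrics computations.
interface = {
    "jobs": {
        "SENTIMENT": {"mlTask": "CLASSIFICATION"},
        "COMMENT_FLAG": {
            "mlTask": "CLASSIFICATION",
            "isIgnoredForMetricsComputations": True,
        },
    }
}


def agreement_score(labels: list, interface: dict) -> float:
    """Mean pairwise exact-match agreement over jobs that count for metrics.

    `labels` holds one dict per annotator, mapping job name to chosen category.
    This is an illustrative stand-in, not the platform's scoring rule.
    """
    jobs = [
        name for name, job in interface["jobs"].items()
        if not job.get("isIgnoredForMetricsComputations", False)
    ]
    pairs = list(combinations(labels, 2))
    if not jobs or not pairs:
        raise ValueError("need at least one counted job and two labels")

    # Count, for every pair of annotators and every counted job, exact matches.
    matches = sum(a.get(job) == b.get(job) for a, b in pairs for job in jobs)
    return matches / (len(pairs) * len(jobs))


labels = [
    {"SENTIMENT": "positive", "COMMENT_FLAG": "yes"},
    {"SENTIMENT": "positive", "COMMENT_FLAG": "no"},
    {"SENTIMENT": "negative", "COMMENT_FLAG": "yes"},
]
print(round(agreement_score(labels, interface), 3))
```

Here only the SENTIMENT job is compared: one of the three annotator pairs agrees, so the sketch returns one third, regardless of how the annotators answered COMMENT_FLAG.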
